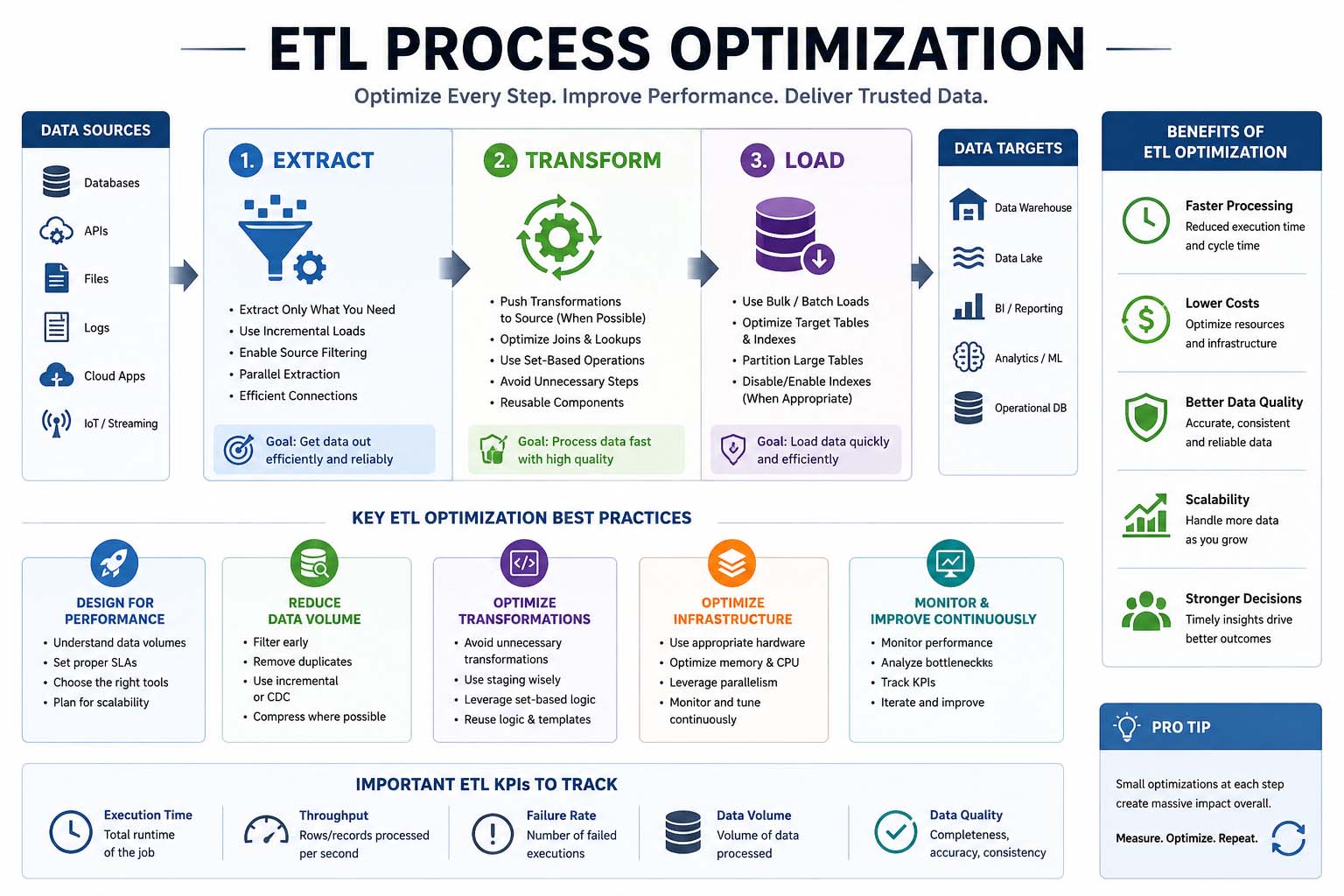

Businesses rely heavily on data to make better decisions, improve customer experiences, and streamline operations. One of the most important parts of managing business data is the ETL process. ETL stands for Extract, Transform, and Load: the process of collecting data from multiple sources, converting it into a usable format, and loading it into a data warehouse or database. ETL process optimization is essential because slow or inefficient data pipelines can reduce productivity, increase costs, and delay business intelligence reporting.

Without optimization, ETL workflows can become slow, consume excessive resources, and create delays in analytics systems. Optimizing ETL processes helps businesses improve speed, maintain data quality, and ensure reliable reporting.

Understanding ETL Process Optimization

ETL process optimization refers to improving the efficiency, reliability, and speed of ETL workflows. The goal is to reduce processing time, minimize errors, and maximize system performance. Businesses that rely on large datasets often face issues such as long loading times, duplicate records, and inconsistent data formatting. Optimization techniques solve these problems and create a smoother data integration process.

An optimized ETL pipeline ensures that data moves quickly between systems without causing bottlenecks. This is especially important for industries like finance, healthcare, retail, and eCommerce, where real-time insights are critical for decision-making.

Why ETL Optimization Matters

Organizations use ETL systems to support analytics platforms, reporting tools, and machine learning applications. If the ETL process is slow, reports may contain outdated information, which can negatively affect business decisions. ETL process optimization improves performance and ensures that accurate data is available when needed.

Another important reason for optimization is cost reduction. Inefficient ETL workflows consume additional storage, memory, and processing power. By improving the process, businesses can lower infrastructure costs while increasing productivity.

Optimized ETL systems also improve scalability. As businesses grow, the amount of data they handle increases rapidly. A scalable ETL process can manage larger datasets without performance degradation.

Common Challenges in ETL Processes

Many businesses experience challenges when managing ETL pipelines. One of the most common issues is handling large volumes of data. As databases grow, extraction and transformation tasks take longer to complete. This can create delays in reporting systems and analytics dashboards.

Data quality is another major challenge. Inconsistent formatting, duplicate entries, and missing values can affect the accuracy of reports. ETL optimization helps maintain clean and reliable datasets.

Network latency and slow database queries also impact ETL performance. If systems are not properly optimized, transferring data between servers may take significant time. This can reduce overall efficiency and create workflow bottlenecks.

Best Practices for ETL Process Optimization

There are several effective strategies businesses can use to optimize ETL workflows. One of the most important practices is incremental loading. Instead of processing the entire dataset every time, incremental loading transfers only new or updated records. This significantly reduces processing time and resource consumption.
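Incremental loading can be sketched with a "watermark", the timestamp of the last record already loaded. The example below is a minimal illustration using Python's built-in sqlite3 module; the `customers` table, its `updated_at` column, and the function names are assumptions made for the example, not part of any particular ETL tool.

```python
import sqlite3

def make_db():
    """Create an in-memory database with a hypothetical customers table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
    )
    return conn

def incremental_load(src, dst, watermark):
    """Copy only rows changed after `watermark`; return (rows_copied, new_watermark)."""
    rows = src.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # INSERT OR REPLACE overwrites an existing row with the same primary key,
    # so updated records replace their older copies in the target.
    dst.executemany("INSERT OR REPLACE INTO customers VALUES (?, ?, ?)", rows)
    dst.commit()
    new_watermark = rows[-1][2] if rows else watermark
    return len(rows), new_watermark

source, warehouse = make_db(), make_db()
source.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Ada", "2024-01-01T10:00:00"), (2, "Grace", "2024-01-02T09:30:00")],
)
source.commit()

# First run: an empty watermark sorts before every timestamp, so all rows load.
first_count, watermark = incremental_load(source, warehouse, "")

# One source row changes; the second run transfers only that row.
source.execute(
    "UPDATE customers SET name = 'Ada L.', updated_at = '2024-01-03T08:00:00' WHERE id = 1"
)
source.commit()
second_count, watermark = incremental_load(source, warehouse, watermark)
```

The sketch assumes timestamps are stored as ISO 8601 strings, which compare correctly as text. A production pipeline would also need to persist the watermark between runs and handle deleted rows, which this example does not cover.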

Another important method is parallel processing. Running multiple independent ETL tasks simultaneously can improve performance and reduce workflow delays, provided the tasks do not depend on each other's output. Parallel execution is especially useful for large-scale data environments where millions of records must be processed daily.
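As a rough illustration of parallel execution, the sketch below splits a dataset into chunks and transforms them concurrently with Python's standard `concurrent.futures` module. The record layout and the `transform_chunk` and `split` helpers are hypothetical, chosen only to keep the example small.

```python
from concurrent.futures import ThreadPoolExecutor

def transform_chunk(chunk):
    """Convert a dollar amount to integer cents for every record in the chunk."""
    return [{"id": r["id"], "amount_cents": round(r["amount"] * 100)} for r in chunk]

def split(records, size):
    """Break the record list into consecutive chunks of at most `size` items."""
    return [records[i:i + size] for i in range(0, len(records), size)]

records = [{"id": i, "amount": i * 1.5} for i in range(10)]

# Each chunk is independent, so the chunks can be transformed at the same time.
# pool.map returns results in the same order as the input chunks.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(transform_chunk, split(records, 3)))

transformed = [row for chunk in results for row in chunk]
```

Threads tend to suit I/O-bound steps such as database reads or API calls. For CPU-heavy transformations in Python, `ProcessPoolExecutor` from the same module is usually the better fit.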

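Database indexing is also essential for ETL process optimization. Proper indexing on the columns used for filtering and joining improves query speed and allows faster data retrieval. Without it, extraction queries may have to scan entire tables, and ETL jobs may take much longer to complete. Indexes do add overhead to writes, so they should be chosen with the load step in mind as well.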
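The effect of an index can be observed by asking the database how it plans to run a query. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's sqlite3 module; the `orders` table and the index name are made up for the example, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

def plan(conn, sql, params=()):
    """Return SQLite's query plan as one string (the detail text is column 3)."""
    return " ".join(row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params))

query = "SELECT total FROM orders WHERE customer_id = ?"

# Without an index, SQLite has to scan the whole table.
before = plan(conn, query, (42,))

# With an index on the filtered column, it can search the index instead.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan(conn, query, (42,))

print("before:", before)
print("after: ", after)
```

Other databases expose similar tools under different names, so the same check can be applied to the source system an ETL job actually reads from.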

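Data partitioning is another useful strategy. It divides a large dataset into smaller segments, for example by date or region, so that each job reads and processes only the segments it needs. Partitioning can reduce server load and improve overall ETL performance.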
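A simple way to picture partitioning is grouping records by a key so that a job touches only the group it needs. The sketch below partitions in memory by month; the `order_date` and `amount` fields and the `partition_by_month` helper are assumptions for illustration, and real systems typically partition at the storage or database level instead.

```python
from collections import defaultdict

def partition_by_month(records):
    """Group records by the YYYY-MM prefix of their ISO-formatted order_date."""
    partitions = defaultdict(list)
    for record in records:
        partitions[record["order_date"][:7]].append(record)
    return dict(partitions)

orders = [
    {"order_date": "2024-01-15", "amount": 120},
    {"order_date": "2024-01-20", "amount": 80},
    {"order_date": "2024-02-03", "amount": 50},
]

partitions = partition_by_month(orders)

# A job that reports on January reads only the January partition.
january_total = sum(r["amount"] for r in partitions["2024-01"])
```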

Automation in ETL Optimization

Automation plays a major role in modern ETL process optimization. Automated ETL tools reduce manual work, minimize human errors, and improve consistency. Businesses can schedule workflows to run automatically during off-peak hours, reducing system strain during busy periods.

Automation also improves monitoring and error handling. Modern ETL platforms can detect failures, send alerts, and restart processes automatically. This reduces downtime and ensures reliable data delivery.
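The detect, alert, and restart behavior described above can be approximated with a small retry wrapper. This is a minimal sketch, not how any specific ETL platform implements it; `run_with_retry`, `flaky_load`, and the alert callback are hypothetical names.

```python
import time

def run_with_retry(task, max_attempts=3, base_delay=0.01, on_alert=print):
    """Run `task`, alerting on each failure and retrying with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            on_alert(f"attempt {attempt} failed: {exc}")
            if attempt == max_attempts:
                raise  # give up and surface the last error
            # Wait longer after each failure: base_delay, then 2x, then 4x, ...
            time.sleep(base_delay * 2 ** (attempt - 1))

# Simulated load step that fails twice before succeeding.
calls = {"count": 0}

def flaky_load():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("warehouse unavailable")
    return "loaded"

alerts = []
result = run_with_retry(flaky_load, on_alert=alerts.append)
```

In practice the alert callback would send an email or chat notification, and the delay would be seconds or minutes. The short delay here only keeps the example quick to run.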

Cloud-based ETL solutions are becoming increasingly popular because they provide scalability and flexibility. Cloud platforms allow businesses to process large datasets without investing heavily in on-premise infrastructure.

Role of Data Quality in ETL Performance

Data quality management is a critical part of ETL optimization. Poor-quality data can lead to inaccurate reports, failed workflows, and unreliable analytics. Businesses should validate and clean data before loading it into storage systems.

Standardizing data formats improves consistency across systems. Removing duplicate records and correcting missing values also enhances ETL efficiency. Clean data not only improves reporting accuracy but also reduces transformation complexity.
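The cleaning steps mentioned here (standardizing formats, removing duplicates, handling missing values) might look like the following in plain Python. The field names, the choice of email as the deduplication key, and the "UNKNOWN" default are all assumptions made for the example.

```python
def clean(records):
    """Standardize fields, drop rows without an email, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        # Standardize: trim whitespace and lowercase the email.
        email = (record.get("email") or "").strip().lower()
        # Validate and deduplicate: skip rows with no email or a repeated one.
        if not email or email in seen:
            continue
        seen.add(email)
        # Fill a missing country with an explicit default instead of leaving it empty.
        country = (record.get("country") or "UNKNOWN").strip().upper()
        cleaned.append({"email": email, "country": country})
    return cleaned

raw = [
    {"email": " Ada@Example.com ", "country": "us"},
    {"email": "ada@example.com", "country": "US"},     # duplicate after standardizing
    {"email": None, "country": "de"},                  # missing required field
    {"email": "grace@example.com", "country": None},   # missing optional field
]

cleaned = clean(raw)
```

Standardizing before deduplicating matters: the first two rows only match once case and whitespace are normalized.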

ETL Optimization for Big Data Environments

Traditional batch-oriented ETL methods may struggle to handle real-time analytics and high-speed data streams.

Distributed processing frameworks such as Apache Spark and Apache Hadoop are commonly used for ETL optimization in big data systems. These technologies allow organizations to process data across multiple servers simultaneously, improving speed and scalability.

Choosing the Right ETL Tools

Selecting the right ETL software is important for long-term optimization. Modern ETL platforms provide features such as automation, monitoring, scalability, and cloud integration. Popular ETL tools include Talend, Informatica, Microsoft SQL Server Integration Services (SSIS), and Apache NiFi.

Businesses should choose ETL solutions based on their data volume, performance requirements, and integration needs. A flexible and scalable ETL tool can simplify optimization efforts and improve workflow reliability.

Future Trends in ETL Process Optimization

The future of ETL process optimization is closely connected to artificial intelligence and cloud computing. AI-powered ETL tools aim to detect bottlenecks, tune workflows, and improve data quality automatically. Machine learning models may also help predict failures before they occur.

Conclusion

ETL process optimization is essential for businesses that rely on data-driven decision-making. Optimized ETL workflows improve speed, reduce costs, enhance scalability, and ensure high-quality data management. By using techniques such as incremental loading, automation, parallel processing, and cloud integration, organizations can create efficient and reliable data pipelines.